From 68 Alerts to Zero: How I Built a Self-Healing Homelab in a Weekend

I ran an audit on a Friday night. A script crawled every host, checked every service, tested every backup, and spit out a report. 68 gaps. Missing health checks. Silent failures. Services running without monitoring. Backups configured but never verified.

Sixty-eight things that could break without anyone noticing.

By Sunday night, the number was zero. Not because I fixed 68 things manually — I’m not that patient — but because I built the automation to monitor and remediate all of them. Two days of focused work replaced what would have been months of 3 AM SSH sessions.

The audit that started everything

The idea came from a simple question: what’s actually being monitored? I had Prometheus running, Grafana dashboards looking pretty, a handful of alert rules. It felt comprehensive. It wasn’t.

I wrote a gap analysis that checked every host against a baseline: is node_exporter running? Are there alert rules for disk, CPU, memory, service health? Are backups configured? Are backup integrity checks scheduled? Is the remediation bridge watching for failures?

The results were humbling. Out of 12 hosts, only 4 had complete monitoring coverage. Three hosts had no alerting at all. My DNS server — the single most critical piece of infrastructure — had metrics collection but zero alert rules. If BIND9 went down at 2 AM, I’d find out when nothing resolved in the morning.

    # gap_analysis.py: the script that ruined my Friday

    def audit_host(host):
        """Return a list of human-readable monitoring gaps for one host."""
        gaps = []
        if not check_node_exporter(host):
            gaps.append("node_exporter not deployed")
        # Assumption: check_alert_rules returns the names of the required
        # rules this host is missing (an empty list when fully covered).
        missing = check_alert_rules(host, REQUIRED_RULES)
        if missing:
            gaps.append(f"missing alert rules: {', '.join(missing)}")
        if host in BACKUP_HOSTS and not check_restic_config(host):
            gaps.append("restic backup not configured")
        if not check_remediation_mapping(host):
            gaps.append("no remediation playbooks mapped")
        return gaps

    total_gaps = sum(len(audit_host(h)) for h in fleet)
    # Output: 68 gaps across 12 hosts

Sixty-eight gaps. Some were critical (no monitoring on DNS). Some were annoying (backup verify not scheduled). All of them were things I’d assumed were covered because I’d set them up once and never checked.

Day one: monitoring coverage

Saturday morning. Coffee. A plan.

The first problem was coverage. Half my hosts were invisible to Prometheus. The fix was straightforward — an Ansible playbook that deploys node_exporter to every host in the fleet, plus a Prometheus config that auto-discovers targets from the Ansible inventory.
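
One way to wire up that kind of auto-discovery is Prometheus's file-based service discovery: a small script renders the inventory into a JSON targets file that Prometheus rereads when it changes. The following is a minimal sketch, not the playbook's actual mechanism. It assumes the inventory has already been loaded into a dict of group name to hostnames and that node_exporter listens on its default port 9100; the group names, hostnames, and the `build_file_sd` function are illustrative.

```python
import json

NODE_EXPORTER_PORT = 9100  # node_exporter's default port


def build_file_sd(inventory, port=NODE_EXPORTER_PORT):
    """Turn {group: [hostnames]} into Prometheus file_sd target groups."""
    target_groups = []
    for group, hosts in sorted(inventory.items()):
        if not hosts:
            continue  # skip empty groups rather than emit empty target lists
        target_groups.append({
            "targets": [f"{host}:{port}" for host in sorted(hosts)],
            "labels": {"group": group},
        })
    return target_groups


if __name__ == "__main__":
    # Hypothetical inventory; real data would come from the Ansible inventory.
    inventory = {"dns": ["ns1.lab"], "docker": ["app1.lab", "app2.lab"]}
    print(json.dumps(build_file_sd(inventory), indent=2))
```

Written to disk, the output would be picked up by a `file_sd_configs` entry in the Prometheus scrape config, so adding a host to the inventory is enough to get it scraped.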

One ansible-playbook deploy-node-exporter.yml and every host was exporting metrics. That closed 14 gaps immediately.

The alert rules were next. I wrote 52 rules organized by category: host health (disk, CPU, memory, load), service health (systemd units, Docker containers), network (ping, port checks), backup health (last backup age, repository size), and TLS (certificate expiry). Rules that have an automated fix carry a remediation label, which the dispatcher maps to a playbook.

    # alert-rules/host-health.yml
    groups:
      - name: host_health
        rules:
          - alert: DiskSpaceAbove85Pct
            expr: (1 - node_filesystem_avail_bytes / node_filesystem_size_bytes) > 0.85
            for: 5m
            labels:
              severity: warning
              remediation: cleanup-disk
            annotations:
              summary: "Disk usage above 85% on {{ $labels.instance }}"
          - alert: HostDown
            expr: up == 0
            for: 2m
            labels:
              severity: critical
            annotations:
              summary: "{{ $labels.instance }} is unreachable"

Fifty-two rules sounds like a lot. Most are variations on the same template — change the metric, the threshold, and the remediation label. The entire set fits in four files. Writing them took about two hours. Testing them (intentionally filling a disk, stopping a service, killing a container) took another hour.
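
The "same template" idea can be made literal. This is a sketch rather than the repo's actual tooling: a hypothetical `make_rule` helper builds the structure of one alerting rule as a plain dict, and the caller varies only the expression, duration, severity, and remediation label. Serializing the result to YAML (for example with PyYAML) is left out to keep the example dependency-free.

```python
def make_rule(alert, expr, duration, severity, summary, remediation=None):
    """Build one Prometheus alerting rule as a plain dict."""
    labels = {"severity": severity}
    if remediation is not None:
        # Only rules with an automated fix carry a remediation label.
        labels["remediation"] = remediation
    return {
        "alert": alert,
        "expr": expr,
        "for": duration,
        "labels": labels,
        "annotations": {"summary": summary},
    }


def make_group(name, rules):
    """Wrap rules in the top-level structure of a rules file."""
    return {"groups": [{"name": name, "rules": list(rules)}]}


host_health = make_group("host_health", [
    make_rule(
        "DiskSpaceAbove85Pct",
        "(1 - node_filesystem_avail_bytes / node_filesystem_size_bytes) > 0.85",
        "5m", "warning",
        "Disk usage above 85% on {{ $labels.instance }}",
        remediation="cleanup-disk",
    ),
    make_rule("HostDown", "up == 0", "2m", "critical",
              "{{ $labels.instance }} is unreachable"),
])
```

Generating rules this way trades a little indirection for consistency: every rule gets the same label and annotation shape, which matters when a dispatcher keys off those labels.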

By Saturday evening, every host had metrics, every critical condition had an alert rule, and I could see the entire fleet’s health on a single Grafana dashboard. Thirty-one gaps closed.

Day two: remediation and verification

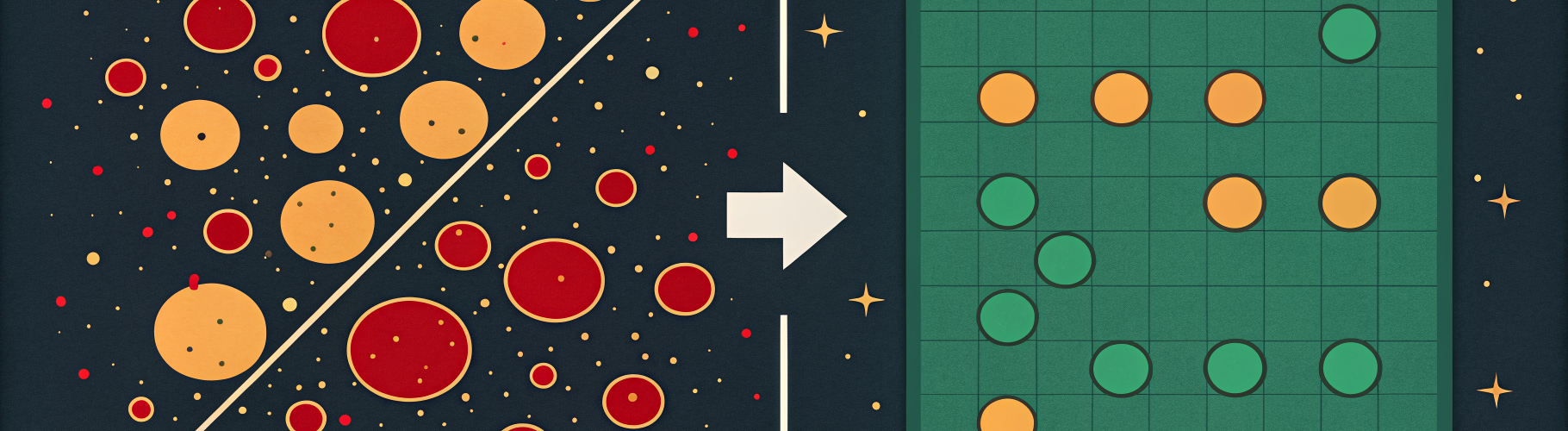

Sunday was about closing the loop. Alerts without automated fixes are just sophisticated ways to wake yourself up.

The remediation bridge — a FastAPI service on the Ansible control node — already existed. I’d built it weeks earlier for container restarts and disk cleanup. What it needed was more mappings. I added playbooks for service restarts, Caddy reloads, backup retries, and DNS health checks. Each one: a short Ansible playbook that fixes exactly one thing.
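
The dispatch logic behind a bridge like this can be quite small. The sketch below is an assumption about its general shape, not the bridge's actual code. It takes an Alertmanager-style webhook payload, looks up each firing alert's `remediation` label in a mapping, and skips anything that already ran recently for the same host. The playbook filenames, the 10-minute cooldown, and the `Dispatcher` class are all hypothetical, and actually invoking `ansible-playbook` is left to the caller.

```python
import time

# Hypothetical mapping from remediation label to playbook file.
PLAYBOOKS = {
    "cleanup-disk": "cleanup-disk.yml",
    "restart-container": "restart-container.yml",
}


class Dispatcher:
    def __init__(self, playbooks, cooldown_seconds=600, clock=time.monotonic):
        self.playbooks = playbooks
        self.cooldown = cooldown_seconds
        self.clock = clock    # injectable so tests can control time
        self.last_run = {}    # (instance, remediation) -> last dispatch time

    def plan(self, payload):
        """Return (playbook, instance) pairs that should run for this webhook."""
        actions = []
        for alert in payload.get("alerts", []):
            if alert.get("status") != "firing":
                continue  # resolved alerts need no fix
            labels = alert.get("labels", {})
            remediation = labels.get("remediation")
            instance = labels.get("instance")
            playbook = self.playbooks.get(remediation)
            if playbook is None or instance is None:
                continue  # no automated fix mapped; notification only
            key = (instance, remediation)
            now = self.clock()
            last = self.last_run.get(key)
            if last is not None and now - last < self.cooldown:
                continue  # still cooling down; avoids remediation loops
            self.last_run[key] = now
            actions.append((playbook, instance))
        return actions
```

Keeping `plan` free of side effects beyond the cooldown bookkeeping makes it easy to unit test: feed it a fake payload and a fake clock, then assert on the returned list.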

The health checks were the piece I’d been missing entirely. Prometheus tells you a service is down. It doesn’t tell you the service is up but returning errors, or that DNS is running but not resolving, or that backups are completing but the data is corrupted.

I wrote 25 health checks that run on a schedule and report to Prometheus via a custom exporter. DNS resolution tests. HTTP endpoint checks. Backup integrity verification. Docker container health probes beyond just “is the process running.”

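    #!/usr/bin/env bash
    # health-checks/dns-resolution.sh
    # Runs every 5 minutes via systemd timer

    DOMAINS=("opskern.io" "wiki.wyrdix.net" "grafana.wyrdix.net")
    FAILURES=0

    for domain in "${DOMAINS[@]}"; do
        # dig exits 0 whenever the server replies, even with an empty answer,
        # so count both a failed query and an empty result as a failure.
        if ! answer=$(dig +short "$domain" @10.2.69.27 2>/dev/null) || [ -z "$answer" ]; then
            FAILURES=$((FAILURES + 1))
        fi
    done

    # Push the failure count to the metrics endpoint on port 9091
    echo "dns_resolution_failures $FAILURES" | curl --data-binary @- \
        http://localhost:9091/metrics/job/dns_health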

Simple. No framework, no dependencies. If DNS can’t resolve my own domains, that’s a problem worth knowing about before users notice.
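
The same pattern covers the "up but returning errors" case for HTTP services. As a rough sketch in Python, the check can be split into a pure decision function and a formatter that emits one sample in the Prometheus text exposition format. The function names, the `http_check_failed` metric name, and the choice to treat any non-2xx status as a failure are assumptions for illustration, and the formatter assumes label values contain no quotes or backslashes.

```python
def http_check_failed(status_code, body, must_contain=None):
    """Return True if a response should count as a failed health check."""
    if status_code is None:
        return True  # no response at all (timeout, connection refused)
    if not 200 <= status_code < 300:
        return True  # service answered, but not with a success status
    if must_contain is not None and must_contain not in body:
        return True  # success status, but the page is not what we expect
    return False


def format_metric(name, value, labels=None):
    """Render one sample in Prometheus text exposition format."""
    if labels:
        pairs = ",".join(f'{k}="{v}"' for k, v in sorted(labels.items()))
        return f"{name}{{{pairs}}} {value}"
    return f"{name} {value}"


# Hypothetical results: (endpoint, status code, response body).
results = [
    ("grafana", 200, "<title>Grafana</title>"),
    ("wiki", 502, "Bad Gateway"),
]
for endpoint, status, body in results:
    failed = int(http_check_failed(status, body))
    print(format_metric("http_check_failed", failed, {"endpoint": endpoint}))
```

Separating the decision from the HTTP call keeps the logic testable without a network; whatever fetches the page only has to hand over a status code and body.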

The test suite

I didn’t trust any of this without tests. If the remediation bridge has a bug, the thing designed to fix problems becomes the problem.

I wrote 128 tests. Unit tests for the dispatcher logic (does it pick the right playbook? does the cooldown work?). Integration tests that fire a fake Alertmanager webhook and verify the correct playbook gets called. End-to-end tests that intentionally break something on a test host and confirm the full loop — detection, dispatch, remediation, notification — completes in under 90 seconds.

    import time

    # ssh, TEST_HOST, and get_recent_notifications are helpers from the test suite.
    def test_container_remediation_e2e():
        """Stop a container, verify auto-restart within 90s."""
        ssh(TEST_HOST, "docker stop grafana")
        time.sleep(90)  # allow the full detect, dispatch, remediate window
        result = ssh(TEST_HOST, "docker inspect -f '{{.State.Running}}' grafana")
        assert result.strip() == "true"

        # Verify notification was sent
        notifications = get_recent_notifications(minutes=5)
        assert any("ContainerDown" in n["message"] for n in notifications)
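
A fixed `time.sleep(90)` always takes the full 90 seconds, even when the fix lands in 20. One possible refinement is to poll for the recovered state and fail only once the deadline passes. The `wait_until` helper below is hypothetical and not part of the suite described here; it is a sketch of the polling approach, with the clock and sleep functions injectable so the helper itself can be tested quickly.

```python
import time


def wait_until(predicate, timeout=90.0, interval=2.0,
               clock=time.monotonic, sleep=time.sleep):
    """Poll predicate() until it is truthy; return elapsed seconds.

    Raises TimeoutError if the condition is not met within `timeout`.
    """
    start = clock()
    while True:
        if predicate():
            return clock() - start
        if clock() - start >= timeout:
            raise TimeoutError(f"condition not met within {timeout}s")
        sleep(interval)
```

In an end-to-end test, the predicate would wrap the `docker inspect` call, and the returned elapsed time could be logged to track how fast remediation actually completes.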

All 128 pass. The test suite runs weekly as a scheduled job. If something regresses, I know before the first real failure hits.

Before and after

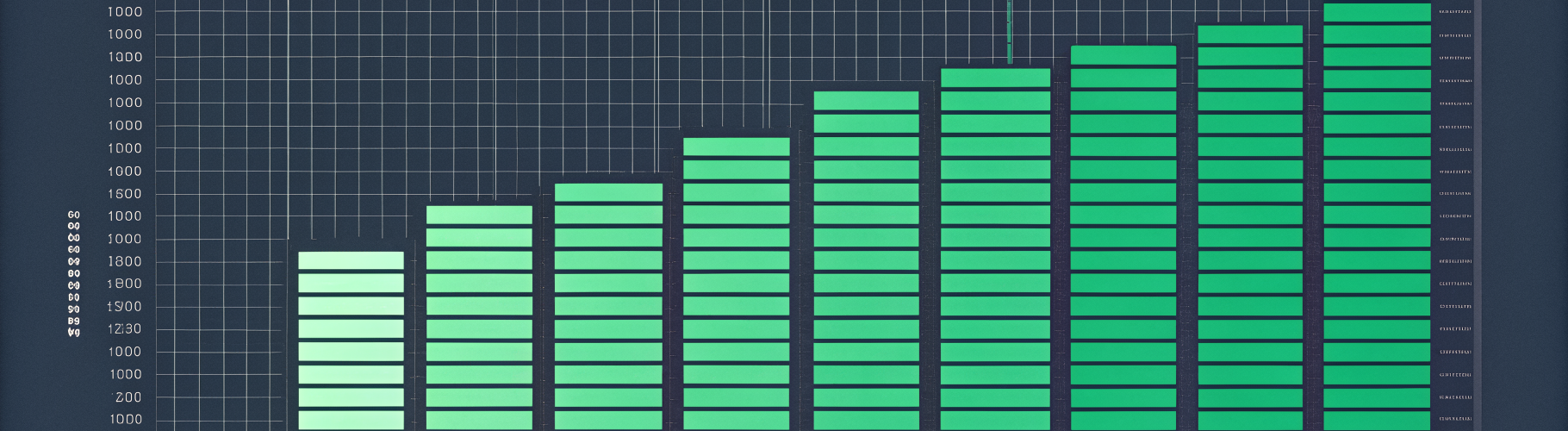

The difference is measurable.

| Metric | Before | After |
|---|---|---|
| Hosts with full monitoring | 4 / 12 | 12 / 12 |
| Alert rules | 9 | 52 |
| Automated remediations | 3 | 17 |
| Health checks | 0 | 25 |
| Automated tests | 0 | 128 |
| Gaps found by audit | 68 | 0 |
| 3 AM SSH sessions (last 30 days) | 4 | 0 |

The last row is the one that matters. Four times in the previous month I’d been woken up or interrupted by something that needed a manual fix. In the month since building this out: zero. The automation handled everything. Two container restarts, one disk cleanup, one Caddy reload. All completed in under 60 seconds. I found out about them over morning coffee, reading notification logs.

What this costs

Time: one weekend of focused work. I already had Prometheus and Ansible in place. If you’re starting from scratch, add a day for the monitoring stack.

Hardware: nothing extra. The remediation bridge, health checks, and test runner all live on the existing Ansible control node. Total resource overhead is negligible: a few Python processes, some scheduled jobs, a couple hundred MB of Prometheus storage.

Complexity: the entire stack is about 2,000 lines across Python, YAML, and bash. No Kubernetes. No service mesh. No cloud APIs. Everything runs on-premises, everything is version controlled, and the whole thing deploys with Ansible.

The stack is open source

Everything described in this post — the remediation bridge, alert rules, health checks, remediation playbooks, test suite, and gap analysis script — is in the ops-kernel-stack repo.

Clone it. Swap in your hostnames. Deploy with Ansible. The README walks through setup in about 30 minutes if you already have Prometheus running.

If you want the full guide — from empty server to self-healing homelab, including Proxmox provisioning, DNS, reverse proxy, backups, and the remediation architecture — the ebook covers every layer.

The free getting started guide gets you from zero to a working Ansible homelab with monitoring and your first automated remediation.

Sixty-eight gaps on a Friday night. Zero by Sunday. The automation doesn’t sleep, doesn’t forget, and doesn’t need coffee. Build it once, and your homelab stops being a second job.

Questions? Open an issue on GitHub or email hello@opskern.io.