5 Ansible Patterns That Saved My Homelab at 3 AM

My phone buzzed at 3:14 AM on a Saturday. A container had crashed on my Docker host. By 3:15 AM, it buzzed again: the container was already back up. I rolled over and went back to sleep.

That’s the version of homelab ownership I signed up for. It took a year of breaking things — and a handful of Ansible patterns I now refuse to run without — to get there.

These five patterns came from real failures. Each one started with me SSH’d into a terminal at an unreasonable hour, fixing something that should have fixed itself. Now it does.

1. The Cooldown-Gated Container Restart

What broke: Grafana crashed at 2 AM. The monitoring stack restarted it automatically. Then Grafana crashed again 90 seconds later — OOM kill from a runaway query. The restart fired again. And again. My phone was getting a notification every two minutes.

The manual fix: SSH in, check docker logs, realize it’s a crash loop, stop the auto-restart, fix the config, restart manually.

The pattern: Restart the container once, then back off. If it crashes again within the cooldown window, stop trying and page a human.

    # remediation/restart-container.yml
    - name: REMEDIATION | Restart containers on {{ target_host }}
      hosts: "{{ target_host }}"
      tasks:
        # The raw block keeps Jinja2 from interpreting Docker's Go template.
        - name: List stopped containers
          command: docker ps -a --filter status=exited --format "{% raw %}{{.Names}}{% endraw %}"
          register: stopped
          changed_when: false

        - name: Restart stopped containers
          command: docker start {{ item }}
          loop: "{{ stopped.stdout_lines }}"
          when: stopped.stdout_lines | length > 0

The cooldown lives in the remediation bridge, not the playbook. The bridge tracks alertname:host pairs and skips dispatch if the last run was within the cooldown window. First crash: auto-fix. Second crash within 10 minutes: your phone rings.

Without the cooldown, you get a restart loop generating notifications until you wake up and intervene. With it, you get one fix attempt and one escalation.
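The bridge's cooldown code isn't shown in this post, so here is a minimal Python sketch of the idea rather than the real thing. The `CooldownGate` class, the in-memory dictionary, and the injectable clock are all assumptions for illustration; the 600-second default matches the 10-minute window described above.

```python
import time


class CooldownGate:
    """Allow one remediation per alertname:host pair per cooldown window."""

    def __init__(self, cooldown_seconds=600, clock=time.monotonic):
        self.cooldown_seconds = cooldown_seconds
        self._clock = clock      # injectable so the gate can be tested
        self._last_run = {}      # "alertname:host" -> time of last dispatch

    def should_dispatch(self, alertname, host):
        """Return True to run the playbook, False to escalate to a human."""
        key = f"{alertname}:{host}"
        now = self._clock()
        last = self._last_run.get(key)
        if last is not None and now - last < self.cooldown_seconds:
            return False         # repeat inside the window: stop retrying
        self._last_run[key] = now
        return True
```

In this sketch a rejected attempt does not reset the timer, so the window is always measured from the last fix that actually ran. State held in memory is lost when the bridge restarts, which may or may not be acceptable for your setup.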

2. The Disk Cleanup That Checks Before It Sweeps

What broke: Disk hit 92% on my DNS server at 1 AM. The alert fired. I SSH’d in the next morning, ran du -sh /*, found 4 GB of old journal logs, ran journalctl --vacuum-time=14d, and went on with my day.

Three minutes of work. Completely predictable. No reason a computer shouldn’t do it.

The pattern: Check disk usage, clean journal logs, prune Docker artifacts if Docker is present, clear the apt cache. Report before-and-after numbers so you can see what was reclaimed.

    # remediation/cleanup-disk.yml (abbreviated)
    - name: REMEDIATION | Cleanup disk on {{ target_host }}
      hosts: "{{ target_host }}"
      become: true
      tasks:
        - name: PRE-FLIGHT | Check current disk usage
          command: df -h /
          register: disk_before
          changed_when: false

        - name: Vacuum journal logs older than 14 days
          command: journalctl --vacuum-time=14d

        # Hosts without Docker have no socket, so the prune task is skipped there.
        - name: Check if Docker is present
          stat:
            path: /var/run/docker.sock
          register: docker_sock

        - name: Prune stopped containers and dangling images
          shell: docker container prune -f && docker image prune -f
          when: docker_sock.stat.exists

The stat check on the Docker socket is important. This playbook runs on every host in the fleet — DNS servers, LXC containers, Docker hosts. Without that guard, it fails on hosts without Docker. Check first, act conditionally.

The production version also runs apt autoclean and reports before/after disk usage in the notification. Typical cleanup: 1-3 GB reclaimed, no human involved.

3. The enabled: true Trap (and the Pattern That Prevents It)

What broke: I deployed Promtail to a new host. Playbook exited cleanly. I checked Loki the next day — no logs from that host. SSH’d in: Promtail wasn’t running. It was enabled (set to start at boot) but never actually started.

The playbook had enabled: true without state: started. Ansible set up the systemd unit, enabled it for boot, and moved on. The service sat there, configured but idle, until the next reboot.

The pattern: Always pair enabled with state: started. Always validate that the service is actually running after the task completes.

    - name: Enable and start Promtail
      ansible.builtin.systemd:
        name: promtail
        enabled: true
        state: started
        daemon_reload: true
      when: not ansible_check_mode

    # Fail the play immediately if the unit did not reach the active state.
    - name: Verify Promtail is running
      command: systemctl is-active promtail
      register: promtail_status
      failed_when: promtail_status.stdout != 'active'
      changed_when: false

This is one of the most common Ansible mistakes. It’s subtle because everything looks right — the playbook succeeds, the unit file exists, systemctl is-enabled returns enabled. You only notice when the service isn’t doing its job, which might be hours or days later.

The validation task makes the failure immediate and loud instead of silent and delayed.

4. The Pre-Maintenance Silence

What broke: I rebooted my Proxmox host for a kernel update. Planned, expected, routine. My phone exploded. HostDown for every VM on that node. ContainerDown for every container on those VMs. DiskSpaceWarning because last-known metrics showed elevated usage. Twelve notifications in 90 seconds for one planned reboot.

The manual fix: Mute your phone before maintenance. Remember to unmute after. (You will forget.)

The pattern: Create an Alertmanager silence before maintenance starts. Expire it when you’re done. Automate both.

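    - name: MAINTENANCE | Silence alerts for target host
      ansible.builtin.uri:
        url: "http://{{ alertmanager_host }}:9093/api/v2/silences"
        method: POST
        body_format: json
        body:
          matchers:
            # Matches alerts whose instance label is exactly target_host.
            - name: instance
              value: "{{ target_host }}"
              isRegex: false
          # Alertmanager expects RFC 3339 timestamps.
          startsAt: "{{ now(utc=true, fmt='%Y-%m-%dT%H:%M:%SZ') }}"
          # Two hours (7200 s) from now; the utc argument needs ansible-core 2.14+.
          endsAt: "{{ '%Y-%m-%dT%H:%M:%SZ' | strftime(now().timestamp() + 7200, utc=true) }}"
          createdBy: "ansible"
          comment: "Planned maintenance via playbook"
      register: silence_result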

The silence has a 2-hour expiration. If the maintenance takes longer, Alertmanager starts sending again — which is what you want. An indefinite silence that gets forgotten is the same as having no monitoring.

I add this as a pre-task in any playbook that reboots a host or stops services. The matching post-task expires the silence early when the work is done. No manual muting, no forgotten silences, no 3 AM notification storms from planned work.
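If you would rather build the silence outside Ansible, the same request can be sketched in Python. Alertmanager's v2 API expects RFC 3339 timestamps and an `isRegex` field on each matcher, and a silence is expired with a DELETE on `/api/v2/silence/{id}`. The helper names `build_silence` and `expire_url` are hypothetical, and the sketch only builds the payload and URL; sending them is left to whatever HTTP client you use.

```python
from datetime import datetime, timedelta, timezone


def build_silence(instance, hours=2, now=None):
    """Build the JSON body for POST /api/v2/silences (hypothetical helper)."""
    start = now or datetime.now(timezone.utc)
    end = start + timedelta(hours=hours)
    fmt = "%Y-%m-%dT%H:%M:%SZ"   # RFC 3339 in UTC
    return {
        "matchers": [{"name": "instance", "value": instance, "isRegex": False}],
        "startsAt": start.strftime(fmt),
        "endsAt": end.strftime(fmt),
        "createdBy": "ansible",
        "comment": "Planned maintenance via playbook",
    }


def expire_url(alertmanager_host, silence_id):
    """URL to send a DELETE to when maintenance finishes early."""
    return f"http://{alertmanager_host}:9093/api/v2/silence/{silence_id}"
```

The silence ID comes back in the POST response as `silenceID`, which is why the Ansible task registers its result: the post-task needs that ID to expire the silence.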

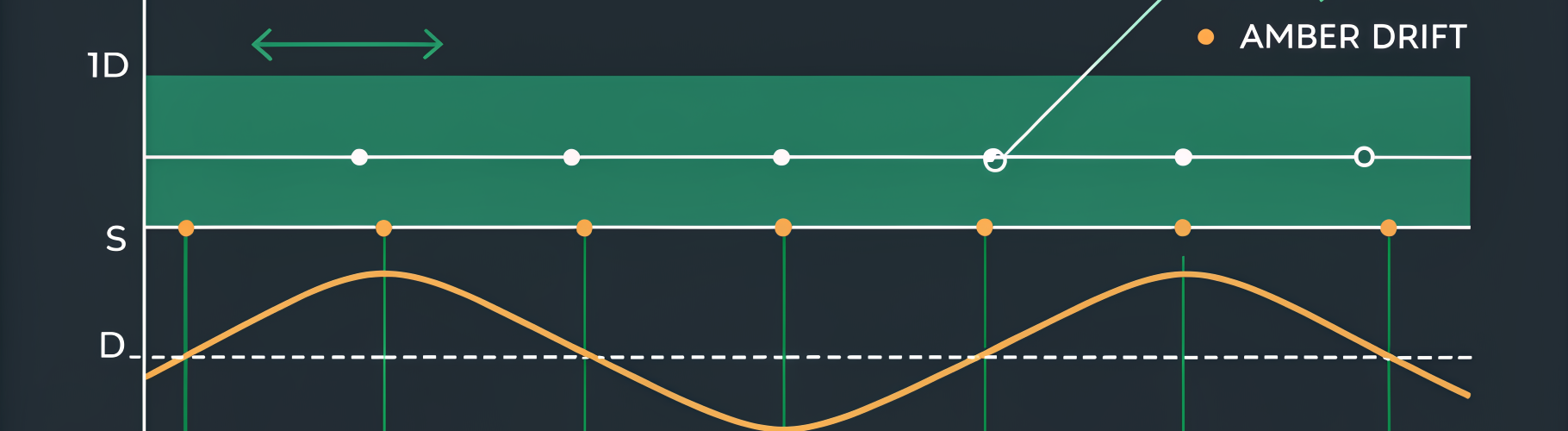

5. The Weekly Drift Check

What broke: I SSH’d into my Docker host to debug a Caddy issue. Changed one line in the Caddyfile. It worked. I forgot to put it in the Ansible playbook. Two months later, a full fleet redeploy overwrote my change, and three services lost their reverse proxy config at once.

The pattern: Run every playbook in --check mode on a weekly schedule. Any reported changes mean drift — the live state doesn’t match what Ansible expects. Investigate before the drift bites you.

    #!/bin/bash
    # drift-check.sh: runs weekly via systemd timer
    CHANGES=0

    for playbook in ~/ansible/playbooks/*.yml; do
        output=$(ansible-playbook "$playbook" --check 2>&1)
        # A PLAY RECAP line with a non-zero changed= count means drift.
        if echo "$output" | grep -Eq 'changed=[1-9]'; then
            CHANGES=$((CHANGES + 1))
        fi
    done

    if [ "$CHANGES" -gt 0 ]; then
        curl -s -X POST "http://ntfy-host:8081/homelab" \
            -H "Title: Drift detected" \
            -H "Tags: warning" \
            -d "$CHANGES playbook(s) reported changes."
    fi

Zero changes means your infrastructure matches your code. Non-zero means something moved without Ansible knowing. Most of the time it’s benign — a minor version bumped, a timestamp changed. Occasionally it’s the forgotten SSH edit that’s about to get overwritten.

The check runs on Sundays at 4 AM. If drift exists, I get a notification. I review it Monday morning. The drift either gets codified (added to the playbook) or corrected (playbook re-applied). Either way, the gap between “what Ansible thinks” and “what actually exists” stays small.
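The grep in the script only answers yes or no per playbook. If you want to know which hosts drifted, the PLAY RECAP lines can be parsed instead. This is a rough Python sketch, not part of my actual setup: the function name `hosts_with_drift` is made up, and it assumes the default recap format of `host : ok=N changed=N ...`.

```python
import re

# One PLAY RECAP line, e.g. "docker01 : ok=12 changed=1 unreachable=0 failed=0"
RECAP_LINE = re.compile(r"^(?P<host>\S+)\s*:\s*(?P<counters>(?:\w+=\d+\s*)+)$")


def hosts_with_drift(output):
    """Return {host: changed_count} for hosts whose recap shows changed > 0."""
    drifted = {}
    for line in output.splitlines():
        match = RECAP_LINE.match(line.strip())
        if not match:
            continue             # not a recap line
        counters = dict(pair.split("=") for pair in match["counters"].split())
        changed = int(counters.get("changed", 0))
        if changed > 0:
            drifted[match["host"]] = changed
    return drifted
```

Feed it the captured output of `ansible-playbook --check` and put the resulting host names in the notification body, so Monday's review starts with a list instead of a count.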

The Stack Behind the Patterns

All five patterns use the same open-source tools: Prometheus for detection, Alertmanager for routing, a Python remediation bridge (~200 lines) for dispatch, and Ansible for the actual fixes. Notifications go through ntfy (self-hosted push notifications) and Slack.

The remediation bridge maps alert names to playbooks in a YAML file. Adding a new automated fix is three lines of YAML and a playbook — no code changes, no restarts.
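The mapping file itself isn't reproduced here, so treat the following as a sketch of the lookup rather than the bridge's real code. The dictionary stands in for the parsed YAML; its keys, the pairing of alert names to playbooks, and the `build_command` helper are assumptions for illustration.

```python
# Stand-in for the parsed YAML mapping; names and keys are illustrative.
ALERT_PLAYBOOKS = {
    "ContainerDown": {"playbook": "remediation/restart-container.yml"},
    "DiskSpaceWarning": {"playbook": "remediation/cleanup-disk.yml"},
}


def build_command(alertname, host, mapping=ALERT_PLAYBOOKS):
    """Return the ansible-playbook argv for an alert, or None if unmapped."""
    entry = mapping.get(alertname)
    if entry is None:
        return None              # no automated fix: leave it for a human
    return [
        "ansible-playbook",
        entry["playbook"],
        "--extra-vars",
        f"target_host={host}",
    ]
```

Returning an argument list rather than a shell string means the result can be handed straight to `subprocess.run` without any quoting concerns around the host name.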

Slack notifications aside, the entire stack runs on-premises. The detection and remediation path has no cloud dependencies, no API keys, no tokens. When the internet goes down (maybe because the thing that broke is the router), the automation still works.

What I’d Tell Myself a Year Ago

Start with pattern 3. The enabled: true trap will bite you on your first deployment, and the fix is two lines. Then add the drift check — it costs nothing and catches problems before they become incidents.

The container restart and disk cleanup patterns need the remediation bridge, which takes an afternoon to set up. The return on that afternoon is every 3 AM page you never have to answer.

The pre-maintenance silence is the one you’ll appreciate most during your next Proxmox upgrade. Trust me.

If you want the full walkthrough — from zero to a self-healing homelab — the companion guide covers the complete stack, including the remediation bridge setup, alert rules, and backup automation.

Get the free getting started guide — it takes you from an empty Ansible directory to a working self-healing homelab.

Questions or war stories? Open an issue on GitHub or email hello@opskern.io.